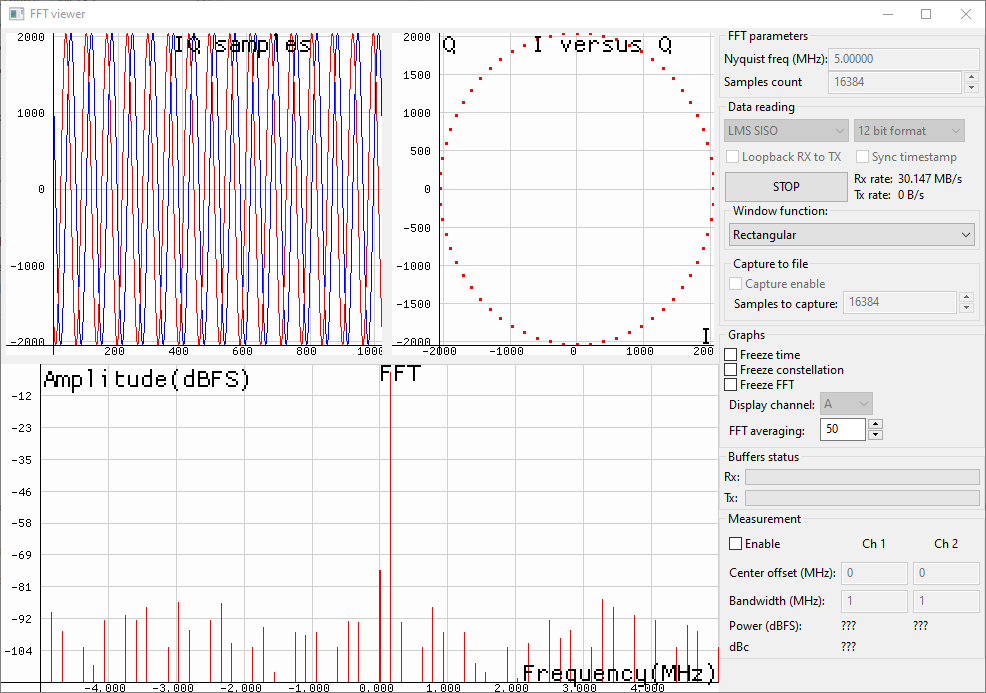

I’m running a test program that generates a QPSK signal, applies pulse shaping and a channel filter (leaving it 5.4 kHz wide), then interpolates it to 108 ksps.
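For anyone who wants a comparable test signal, here is a minimal sketch of such a generator. It is not the program used here. The symbol rate (4 ksym/s), roll-off (0.35) and filter span are assumptions chosen only because 4000 × 1.35 = 5400 Hz fits the stated width, and the sketch folds the pulse shaping and interpolation into one root-raised-cosine stage at 27 samples per symbol, with no separate channel filter.

```python
import math
import random

SAMPLE_RATE = 108_000   # output rate from the post, samples/s
SYMBOL_RATE = 4_000     # ASSUMED, not stated in the post
ROLLOFF = 0.35          # ASSUMED, gives 4000 * 1.35 = 5400 Hz occupied width
SPS = SAMPLE_RATE // SYMBOL_RATE   # 27 samples per symbol
SPAN = 8                # ASSUMED filter length in symbols


def rrc_taps(sps, span, beta):
    """Root-raised-cosine taps, unit energy, time in symbol periods (T = 1)."""
    half = sps * span // 2
    taps = []
    for n in range(-half, half + 1):
        t = n / sps
        if n == 0:
            h = 1.0 + beta * (4.0 / math.pi - 1.0)
        elif abs(abs(4.0 * beta * t) - 1.0) < 1e-9:
            # Removable singularity at t = +/- T / (4 * beta)
            h = (beta / math.sqrt(2.0)) * (
                (1.0 + 2.0 / math.pi) * math.sin(math.pi / (4.0 * beta))
                + (1.0 - 2.0 / math.pi) * math.cos(math.pi / (4.0 * beta)))
        else:
            num = (math.sin(math.pi * t * (1.0 - beta))
                   + 4.0 * beta * t * math.cos(math.pi * t * (1.0 + beta)))
            den = math.pi * t * (1.0 - (4.0 * beta * t) ** 2)
            h = num / den
        taps.append(h)
    norm = math.sqrt(sum(h * h for h in taps))
    return [h / norm for h in taps]


def qpsk_symbols(count, rng):
    """Random unit-magnitude QPSK symbols."""
    points = [complex(i, q) / math.sqrt(2.0) for i in (1, -1) for q in (1, -1)]
    return [rng.choice(points) for _ in range(count)]


def shape(symbols, taps, sps):
    """Place one symbol every sps samples and convolve with the taps."""
    out = [0j] * ((len(symbols) - 1) * sps + len(taps))
    for k, sym in enumerate(symbols):
        base = k * sps
        for j, h in enumerate(taps):
            out[base + j] += sym * h
    return out


if __name__ == "__main__":
    rng = random.Random(0)
    iq = shape(qpsk_symbols(100, rng), rrc_taps(SPS, SPAN, ROLLOFF), SPS)
    print(len(iq), "samples at", SAMPLE_RATE, "samples/s")
```

A signal like this should show a flat-topped spectrum about 5.4 kHz wide with no spurs, which gives a reference for what the TX capture is expected to look like.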

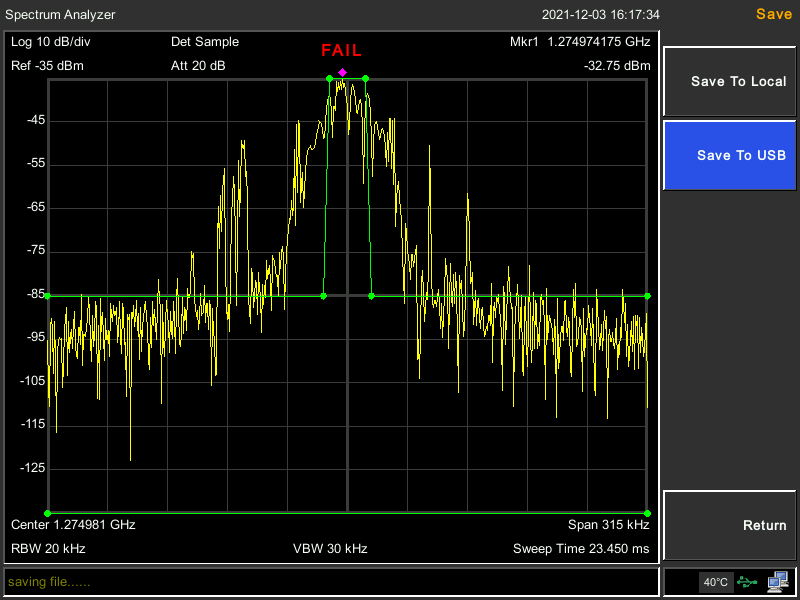

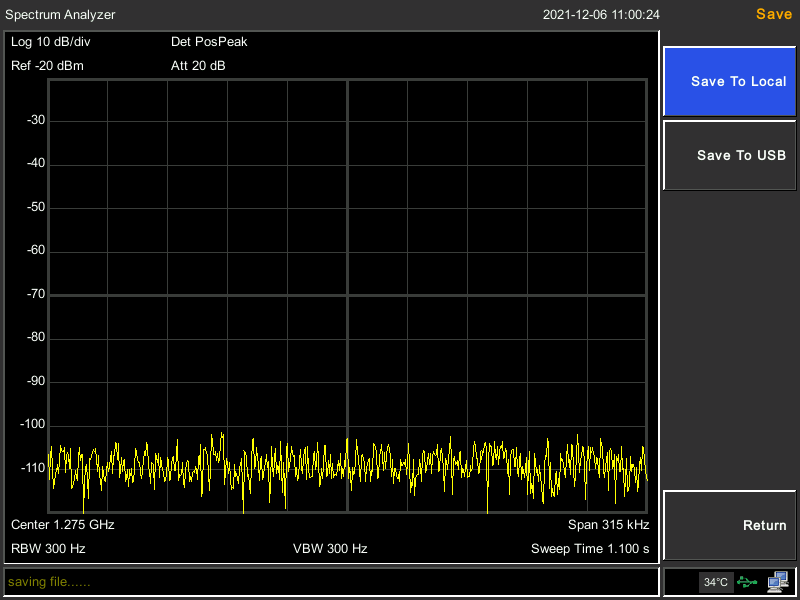

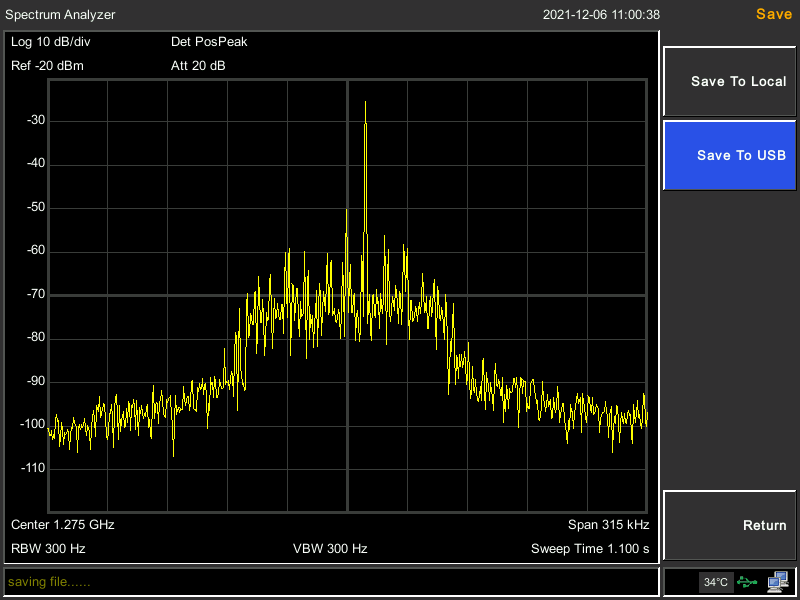

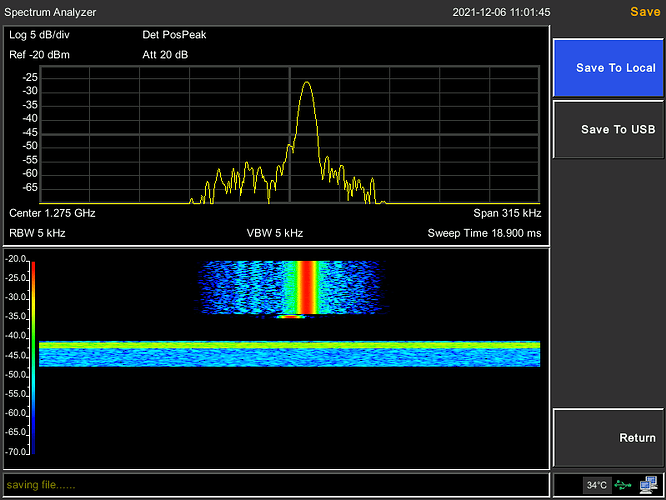

On the spectrum analyzer, I see the TX signal jumping up and down and producing a lot of spurs across the full 108 kHz span and possibly a bit beyond. The spurs change rapidly over time.

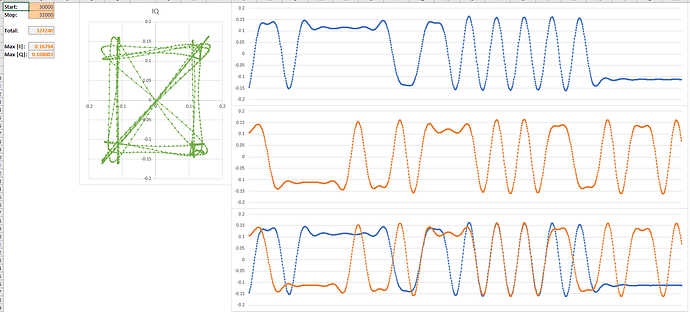

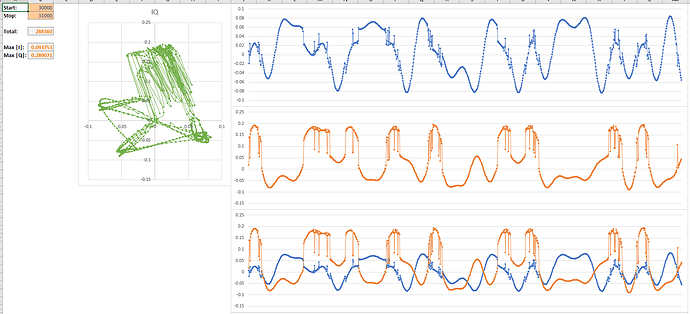

To rule out issues with the spectrum analyzer, I connected the TX port to the RX port of the LimeSDR-USB through an attenuator. I captured the RX samples into a file just after the call to lime::Streamer::Read(), and did the same for the TX samples just before the call to lime::Streamer::Write(). These two calls merely pull data from and push data to the stream FIFO in a thread-safe way, so they run separately in their own threads.
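If you want to look at captures like these outside of a spreadsheet, a small loader is enough. This is only a sketch: it assumes the files hold interleaved little-endian 16-bit I and Q values (4 bytes per complex sample), which is a guess about the capture format, and the function names are made up for illustration.

```python
import struct


def parse_iq_int16(raw):
    """Decode interleaved little-endian int16 I/Q bytes into complex samples.

    ASSUMPTION: 2 bytes I followed by 2 bytes Q per sample.
    Trailing bytes that do not form a whole sample are ignored.
    """
    usable = len(raw) - (len(raw) % 4)
    values = struct.unpack("<%dh" % (usable // 2), raw[:usable])
    return [complex(values[i], values[i + 1]) for i in range(0, len(values), 2)]


def read_iq_int16(path):
    """Read a whole capture file and decode it."""
    with open(path, "rb") as handle:
        return parse_iq_int16(handle.read())
```

Loading the TX and RX files with the same function makes it easy to compare them sample by sample.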

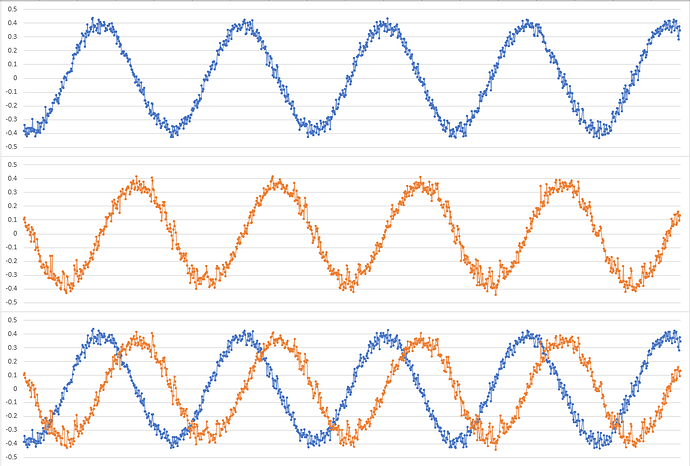

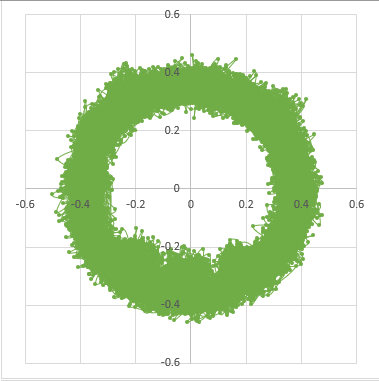

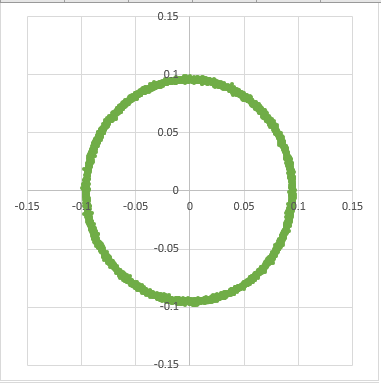

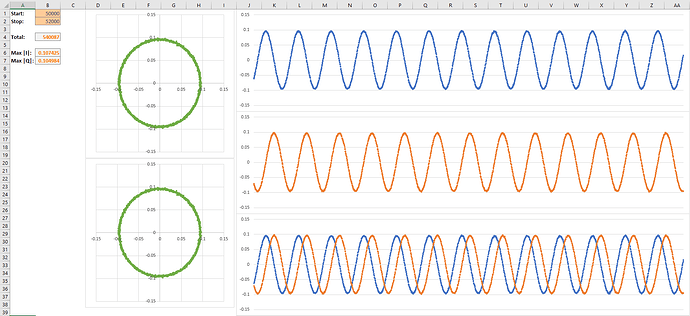

While I cannot provide the code used, I can provide the raw IQ (random QPSK data). When I look at the data, the TX side looks clean and perfect. There are no stream underruns, overruns, or dropped packets, and the reported transfer rate matches 4 bytes per sample at 108 ksps.
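For reference, the rate check is simple arithmetic: 108,000 samples/s × 4 bytes per sample = 432,000 bytes/s. A small sketch of that comparison follows; the 1 % tolerance and the function names are arbitrary choices for illustration.

```python
SAMPLE_RATE = 108_000     # samples/s, from the post
BYTES_PER_SAMPLE = 4      # from the post


def expected_byte_rate(sample_rate=SAMPLE_RATE, bytes_per_sample=BYTES_PER_SAMPLE):
    """Byte rate the stream should sustain for the given sample rate."""
    return sample_rate * bytes_per_sample


def rate_matches(reported_bytes_per_s, tolerance=0.01):
    """True if the reported rate is within the tolerance of the expected rate."""
    expected = expected_byte_rate()
    return abs(reported_bytes_per_s - expected) <= tolerance * expected
```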

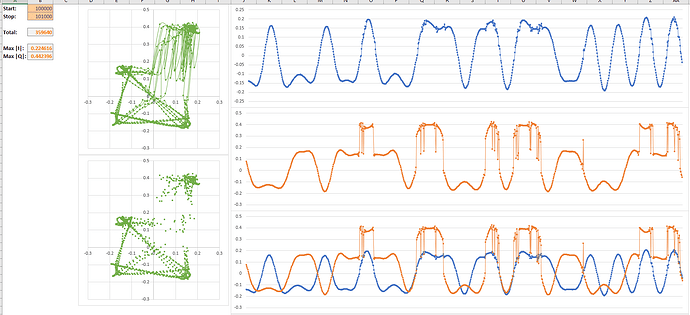

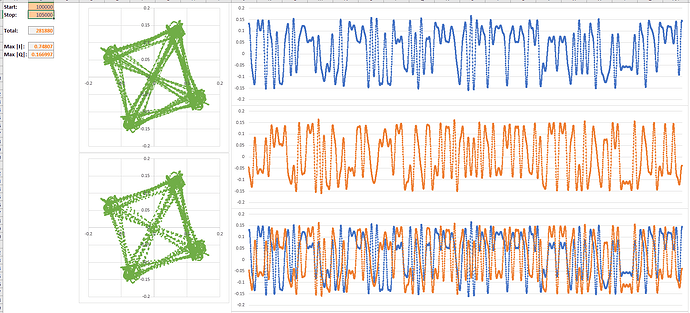

On the RX side, I see the same strange behaviour that I saw on the spectrum analyzer. Not only does the signal die for short periods of time, but it also seems to have added spurs and jumps in the signal timing. I believe the RX itself is working correctly, as I have used it without any issues to receive many different signals.
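One way to put numbers on the "signal dies for short periods" observation is to measure power in short blocks and flag the blocks that fall far below the typical level. The sketch below does that for a list of complex samples. The block length (108 samples, about 1 ms at 108 ksps) and the 20 dB threshold are assumptions to tune, not values taken from the captures.

```python
import statistics


def block_powers(samples, block_len):
    """Mean power of each full, non-overlapping block of samples."""
    powers = []
    for start in range(0, len(samples) - block_len + 1, block_len):
        block = samples[start:start + block_len]
        powers.append(sum(abs(s) ** 2 for s in block) / block_len)
    return powers


def find_dropouts(samples, block_len=108, drop_db=20.0):
    """Return start indices of blocks more than drop_db below the median power."""
    powers = block_powers(samples, block_len)
    if not powers:
        return []
    threshold = statistics.median(powers) / (10.0 ** (drop_db / 10.0))
    return [i * block_len for i, p in enumerate(powers) if p < threshold]
```

Running this on both the TX and RX captures would show whether the dropouts exist only on the RX side, and the spacing between the returned indices would show whether they are periodic.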

I’ve tried a lot of different configuration options, but this does not seem to be either a configuration issue or a streaming issue in my software. I believe the issue originates in the SDR hardware itself. I have not tried powering the device externally. Changing the output power and setting all gains to minimum did not change the behaviour. I’m running the latest release version of the LMS API, and the firmware and gateware on my SDR device are up to date.

What else could I try that could make a difference? Do I simply have a defective device?

Thanks,

JD

Edit: By the way, yes I have used Excel as an IQ viewer, and I’m not ashamed.